EXPANDING THE WAYMO OPEN DATASET WITH INTERACTIVE SCENARIO DATA AND NEW CHALLENGES

One of the most important things an intelligent driver needs to do is to understand what the road users around it are going to do next. Is that pedestrian trying to cross the street? Is that car parallel parked, or about to pull into my lane? Will that speeding vehicle stop at the stop sign?

Accurately predicting the behavior of other road users is one of the hardest problems in autonomous driving. It also has significant safety implications – correctly evaluating another driver’s likely behavior is essential to being able to mitigate crashes.

While AV researchers have made significant progress on the motion forecasting problem in recent years, more high-quality open-source motion data can help achieve new breakthroughs throughout the industry.

Today, we’re expanding the Waymo Open Dataset with the publication of a motion dataset – which we believe to be the largest interactive dataset yet released for research into behavior prediction and motion forecasting for autonomous driving.

We’re also announcing the next round of Waymo Open Dataset Challenges – with cash awards – to help encourage new research into both perception and behavior prediction.

Finally, we’re releasing a paper describing the state-of-the-art research offboard perception method we used to annotate the motion dataset, so any research team can consider our techniques when exploring ways to create their own high-quality motion data.

The most useful data for hard motion forecasting challenges

High-quality motion data is particularly valuable because it is time-consuming and expensive to source.

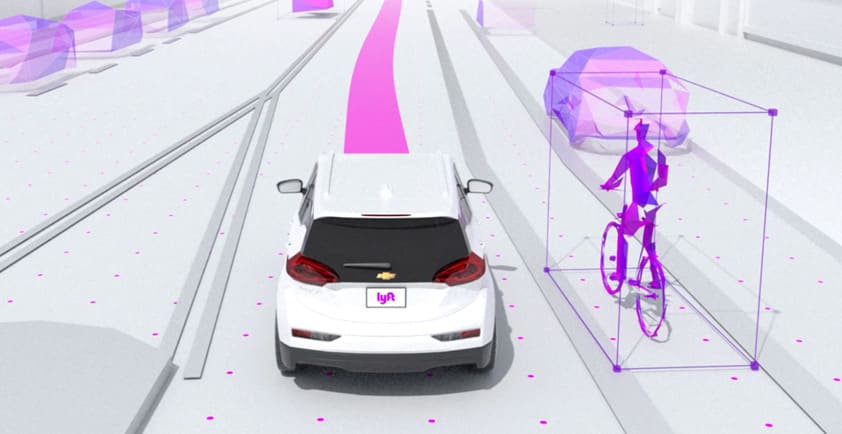

Generating a motion dataset with high-quality labels requires a sophisticated perception system that can accurately pick out agents and objects from camera and lidar data, and track their movements throughout a scene.

Interesting motion data is also hard to gather. Most day-to-day driving is uneventful – which makes for uninformative data when you are building a system to predict what could happen on the road in unusual situations. As a result, existing datasets often have a limited number of interesting interactions.

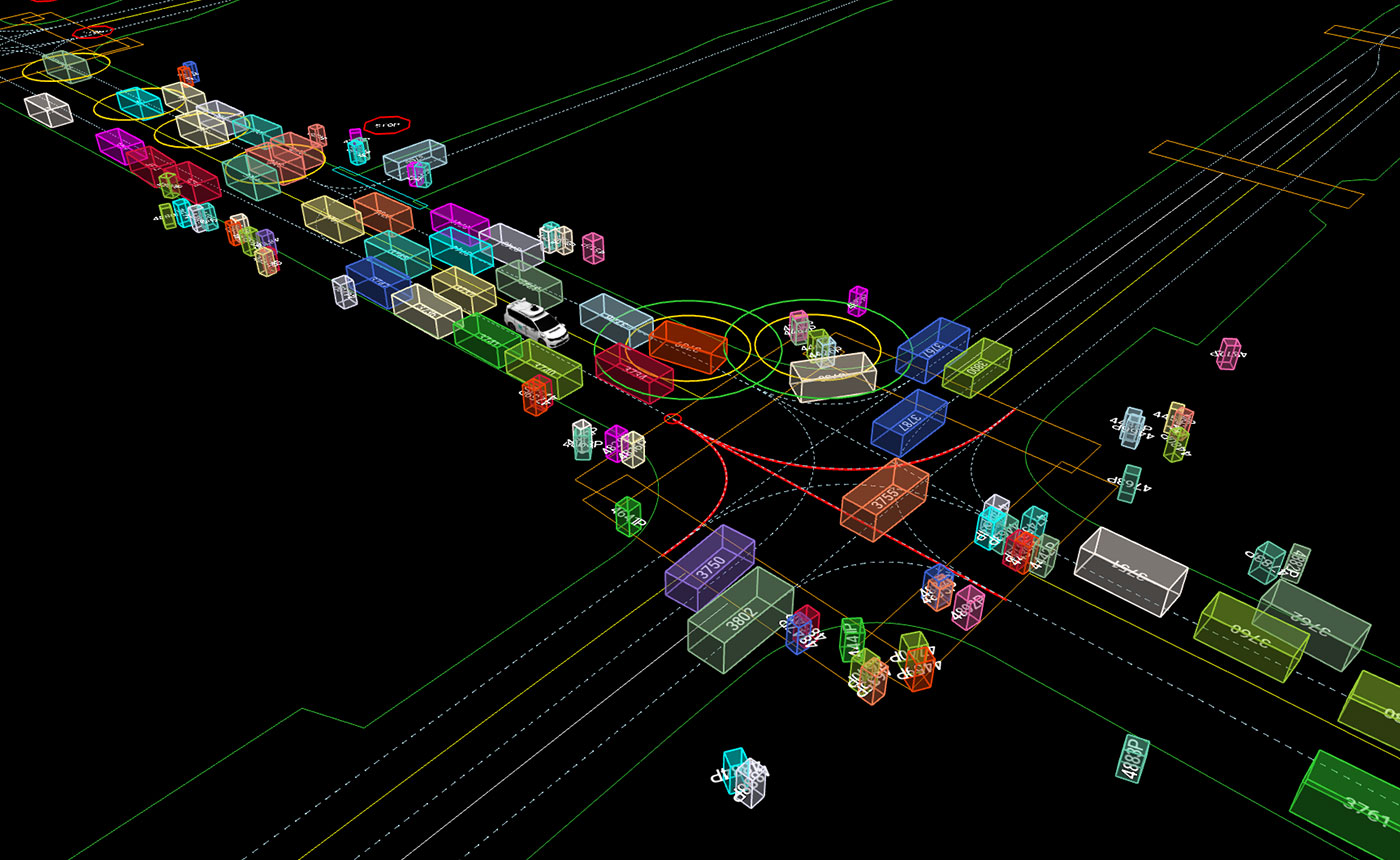

The Waymo Open Dataset seeks to address these challenges. With object trajectories and corresponding 3D maps for over 100,000 segments, each 20 seconds long and mined for interesting interactions, our new motion dataset contains more than 570 hours of unique data. We believe it’s the largest dataset of interactive behaviors yet released for autonomous driving research.
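As a rough sanity check on these figures, the short sketch below converts between segment counts and hours. It assumes segments do not overlap in time (which is how "unique data" is read here); the function and constant names are illustrative and not part of any Waymo tooling.

```python
SEGMENT_SECONDS = 20      # stated length of each segment
MIN_SEGMENTS = 100_000    # "over 100,000 segments"
STATED_HOURS = 570        # "more than 570 hours"


def hours_of_data(num_segments: int, segment_seconds: float = SEGMENT_SECONDS) -> float:
    """Total hours covered by non-overlapping segments of equal length."""
    return num_segments * segment_seconds / 3600


def segments_for_hours(hours: float, segment_seconds: float = SEGMENT_SECONDS) -> float:
    """Number of equal-length segments needed to cover the given hours."""
    return hours * 3600 / segment_seconds


# 100,000 segments alone give roughly 555.6 hours, so "over 100,000" is a floor.
lower_bound_hours = hours_of_data(MIN_SEGMENTS)

# 570 hours of 20-second segments corresponds to 102,600 segments.
implied_segments = segments_for_hours(STATED_HOURS)
```

Under that assumption the two stated figures are consistent: a little over 102,600 segments of 20 seconds each yields more than 570 hours.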

We have tried to make this data as useful as possible for researchers exploring how to create effective behavior prediction systems:

1. We have included a wide variety of data to help develop more robust, flexible motion forecasting models. The Waymo Open Dataset is one of the most geographically varied motion datasets yet released, featuring a wide variety of road types and driving conditions captured around the clock in different urban environments, including San Francisco, Phoenix, Mountain View, Los Angeles, Detroit and Seattle, to encourage models that can better generalize to new driving environments.

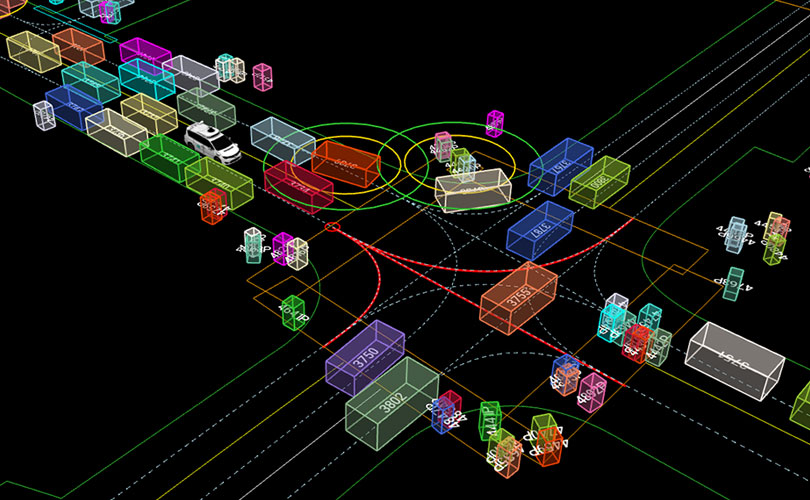

2. We have mined the data specifically to include interesting examples of agents interacting – whether it’s cyclists and vehicles sharing the roadway, cars quickly passing through a busy junction, or groups of pedestrians clustering on the sidewalk. At 20 seconds, each segment is long enough to train models that can capture complex behaviors. We have also included map information for each scene to provide semantic context that’s important for accurate predictions.

3. We used our state-of-the-art research offboard perception system to create higher-quality perception boxes than are found in other datasets. This is important because the better the perception system is at tracking the objects and agents in the motion data, the more accurate the resulting behavior prediction model will be at predicting what they are going to do.

4. We are releasing a paper describing the state-of-the-art offboard perception techniques we used to annotate this new data. We trained this system on the perception data that we already made available in the Waymo Open Dataset, so our process for creating this new motion dataset is as transparent as possible. Not only should this make it easier for researchers to scrutinize the quality of the data we’re releasing, but it may also inspire others as they consider ways to create their own high-quality motion datasets. This paper has been accepted at CVPR 2021.

5. We have created new benchmarks for assessing behavior prediction models. High-quality evaluation is critical to making progress in machine learning: the better the benchmark, the better the model that scores well on it. So we have released a suite of interaction metrics to allow robust benchmarking for models trained on this or any other motion dataset.
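This post does not list the individual metrics in the suite. To illustrate what a motion forecasting benchmark typically measures, here is a minimal sketch of one widely used displacement metric, minimum average displacement error (minADE): a model proposes several candidate futures for an agent, and the score is the average point-wise distance of the closest candidate to the ground truth. The function names and the toy trajectories are hypothetical, and this is not presented as the dataset's official metric code.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (x, y) position in meters


def average_displacement_error(pred: Sequence[Point], truth: Sequence[Point]) -> float:
    """Mean Euclidean distance between matching timesteps of two trajectories."""
    if len(pred) != len(truth) or not truth:
        raise ValueError("trajectories must be non-empty and equally long")
    total = sum(math.hypot(px - tx, py - ty) for (px, py), (tx, ty) in zip(pred, truth))
    return total / len(truth)


def min_ade(candidates: Sequence[Sequence[Point]], truth: Sequence[Point]) -> float:
    """ADE of the candidate trajectory that lies closest to the ground truth."""
    if not candidates:
        raise ValueError("at least one candidate trajectory is required")
    return min(average_displacement_error(c, truth) for c in candidates)


# Toy example: the agent actually drives straight along the x axis.
truth = [(1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
candidates = [
    [(1.0, 0.5), (2.0, 0.5), (3.0, 0.5)],  # straight, offset by 0.5 m
    [(1.0, 1.0), (2.0, 2.0), (3.0, 3.0)],  # veering away from the true path
]
score = min_ade(candidates, truth)  # the offset-but-straight candidate wins
```

Taking the minimum over candidates rewards models that cover the true outcome with at least one hypothesis, which matters when an agent's future is genuinely ambiguous.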

We hope this data will be useful for researchers working on behavior prediction in a wide variety of domains and industries. You can read more about the dataset on our website and in our GitHub repo, and download the data here. We have also released a Colab tutorial to provide an accessible introduction to using the dataset. We will release an accompanying paper explaining the main features of the dataset soon.

The new dataset includes many examples of interesting interactions useful for motion prediction – like agents interacting at this busy intersection in San Francisco.

Announcing four new Waymo Open Dataset challenges

Alongside our latest dataset, we are delighted to announce the next round of Challenges to encourage work on both perception and behavior prediction. The four challenges are:

1. Motion prediction challenge: Given agents' tracks for the past 1 second on a corresponding map, predict the positions of up to 8 agents for 8 seconds into the future.

2. Interaction prediction challenge: Given agents' tracks for the past 1 second on a corresponding map, predict the joint future positions of 2 interacting agents for 8 seconds into the future.

3. Real-time 3D detection: Given lidar range images and their associated camera images, produce a set of 3D upright boxes for the objects in the scene, with a latency requirement.

4. Real-time 2D detection: Given a set of camera images, produce a set of 2D boxes for the objects in the scene, with a latency requirement.
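To make the input and output windows of the two prediction challenges concrete, the sketch below splits a single agent track into a 1-second history and an 8-second future. The 10 Hz sampling rate is an assumption (this post does not state one), as is the choice to include the current sample in the history; `ForecastExample` and `split_track` are hypothetical names, not part of the dataset's API.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position in meters

SAMPLE_HZ = 10        # assumption: sampling rate is not stated in this post
HISTORY_SECONDS = 1   # "tracks for the past 1 second"
FUTURE_SECONDS = 8    # "8 seconds into the future"


@dataclass
class ForecastExample:
    history: List[Point]  # model input: past samples plus the current one
    future: List[Point]   # prediction target: samples after the current one


def split_track(track: List[Point], current_index: int,
                sample_hz: int = SAMPLE_HZ) -> ForecastExample:
    """Cut one agent's track into history and future around current_index."""
    start = current_index - HISTORY_SECONDS * sample_hz
    end = current_index + FUTURE_SECONDS * sample_hz
    if start < 0 or end >= len(track):
        raise ValueError("track does not cover the full history and future window")
    return ForecastExample(
        history=track[start:current_index + 1],
        future=track[current_index + 1:end + 1],
    )


# A 20-second segment sampled at the assumed 10 Hz has 200 positions.
track = [(0.1 * i, 0.0) for i in range(200)]
example = split_track(track, current_index=10)
# example.history has 11 samples, example.future has 80 samples.
```

In this framing, the motion prediction challenge would apply such a split to up to 8 target agents independently, while the interaction prediction challenge asks for the futures of 2 agents to be predicted jointly, so that the pair of trajectories is consistent with each other.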

The winning team for each challenge will receive a $15,000 cash award, with second-place teams receiving $5,000 and third-place teams $2,000.

You can find the rules for participating in the challenges here. The challenges close at 11:59pm Pacific on May 31, 2021, but the leaderboards will remain open for future submissions. We’re also inviting eligible winners to present their work at our Workshop on Autonomous Driving at CVPR, scheduled for June 20, 2021.

Since we first launched the Waymo Open Dataset in August 2019, it has had a significant impact on the research community:

> Its high-quality perception data has already spurred much academic research – as signaled by over 130 citations to our perception paper – benefiting autonomous technology teams worldwide.

> More than 150 teams participated in last year’s Challenges.

> It is also encouraging work far beyond the Challenges. Just recently, a collaboration with researchers at Google Brain drew on the vast quantity and quality of annotated lidar data in the Waymo Open Dataset to publish a set of 3D scene flow labels 1,000x larger than previous real-world datasets, to encourage further research on 3D motion estimation.

> It has also lowered barriers to entry for students and academics to experiment with new techniques without needing an autonomous vehicle to gather their own data.

We hope this expansion into motion data spurs on a new wave of research.

I know first-hand how important high-quality data is to tackling thorny AV research questions, and I’m incredibly proud of the Waymo research team’s efforts to continue evolving the dataset and making it applicable to an increasing number of research areas. We welcome community feedback on how to make our dataset even more impactful in future updates.

Author - Drago Anguelov, Distinguished Research Scientist