SIMULATION WITHOUT LIMITS: DRIVE SIM LEVELS UP WITH NVIDIA OMNIVERSE

GTC demo showcases a photoreal, physically accurate autonomous vehicle sensor simulation.

The line between the physical and virtual worlds is blurring as autonomous vehicle simulation sharpens with NVIDIA Omniverse, our photorealistic 3D simulation and collaboration platform.

During the GPU Technology Conference keynote, NVIDIA founder and CEO Jensen Huang showcased for the first time NVIDIA DRIVE Sim running on NVIDIA Omniverse. DRIVE Sim leverages the cutting-edge capabilities of the platform for end-to-end, physically accurate autonomous vehicle simulation.

Omniverse was architected from the ground up to support multi-GPU, large-scale, multisensor simulation for autonomous machines. It enables ray-traced, physically accurate, real-time sensor simulation with NVIDIA RTX technology.

The video shows a digital twin of a Mercedes-Benz EQS driving a 17-mile route around a recreated version of the NVIDIA campus in Santa Clara, Calif. The route includes Highways 101 and 87 and Interstate 280, with traffic lights, on-ramps, off-ramps and merges, as well as changes to the time of day, weather and traffic.

To build this replica of the real-world test loop, the environment was scanned at 5-cm accuracy and recreated in simulation. The hardware, software, sensors, car displays and human-machine interaction were all implemented in simulation exactly as they are in the real world, enabling bit- and timing-accurate simulation.

Physically Accurate Sensor Simulation

Autonomous vehicle simulation requires accurate physics and light modeling. This is especially critical for simulating sensors, which requires modeling rays beyond the visible spectrum and accurate timing between the sensor scan and environment changes.
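To see why timing matters, consider a spinning lidar. Its beams fire one after another over the course of a sweep, so a simulator that evaluates every beam against a single frozen snapshot of the world will misplace anything that moves. The short Python sketch below puts a number on that error. It is an illustration only, not DRIVE Sim code: the function names, the 360-beam layout and the 0.1-second sweep period are all assumptions chosen for the example.

```python
def beam_fire_time(beam_index, num_beams, sweep_period_s):
    """Time offset in seconds from sweep start at which a given beam fires.

    Assumes (for illustration) that beams fire at evenly spaced times
    across one full rotation.
    """
    return sweep_period_s * beam_index / num_beams


def snapshot_error_m(target_speed_mps, beam_index, num_beams=360, sweep_period_s=0.1):
    """Distance a target has moved between sweep start and the beam firing.

    This is the position error a simulator would introduce if it froze the
    world at sweep start instead of updating it per beam.
    """
    return target_speed_mps * beam_fire_time(beam_index, num_beams, sweep_period_s)


if __name__ == "__main__":
    # A car passing at 30 m/s, hit by the beam halfway through the sweep.
    error = snapshot_error_m(target_speed_mps=30.0, beam_index=180)
    print(f"position error from a frozen snapshot: {error:.2f} m")
```

Under these assumed numbers, a vehicle traveling at 30 m/s has moved roughly 1.5 meters by the time the mid-sweep beam reaches it, which is why scan timing and environment updates have to be modeled together.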

Ray tracing is perfectly suited for this, providing realistic lighting by simulating the physical properties of light. And the Omniverse RTX renderer coupled with NVIDIA RTX GPUs enables ray tracing at real-time frame rates.

The capability to simulate light in real time has significant benefits for autonomous vehicle simulation. In the video, the vehicles show complex reflections of objects in the scene, including objects not directly in the frame, just as they would in the real world. The same applies to other reflective surfaces such as wet roadways, reflective signs and buildings.

RTX also enables high-fidelity shadows. In virtual environments, shadows are typically pre-computed, or pre-baked. A dynamic simulation environment rules out pre-baking, so RTX computes shadows at run time instead. In the night parking example from the video, the shadows cast by the lights are rendered directly rather than pre-baked, which makes them appear softer and much more accurate.

Nighttime parking scenarios show the benefit of ray tracing for complex shadows generated by dynamic light sources.
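The softer look of ray-traced shadows comes from treating a light as an area rather than a point. A spot on the ground is darkened in proportion to how much of the light is hidden from it, which produces a gradual penumbra instead of a hard edge. The Python sketch below illustrates the idea with a toy scene. It is not the Omniverse RTX renderer: the square light, the spherical occluder, the sample count and every function name are assumptions made for the example.

```python
import random


def segment_hits_sphere(start, end, center, radius):
    """Return True if the straight segment start->end passes through the sphere."""
    d = [e - s for s, e in zip(start, end)]      # segment direction
    f = [s - c for s, c in zip(start, center)]   # sphere center to segment start
    a = sum(x * x for x in d)
    b = 2.0 * sum(x * y for x, y in zip(f, d))
    c = sum(x * x for x in f) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return False                             # the infinite line misses the sphere
    root = disc ** 0.5
    t_near = (-b - root) / (2.0 * a)
    t_far = (-b + root) / (2.0 * a)
    # A hit only counts if it lies between the two endpoints.
    return 0.0 <= t_near <= 1.0 or 0.0 <= t_far <= 1.0


def light_visibility(point, light_center, light_half_size,
                     occluder_center, occluder_radius, samples=256, seed=0):
    """Fraction of shadow rays from `point` that reach a square area light.

    The light is a horizontal square centered at `light_center`. A result of
    0.0 means full shadow, 1.0 means fully lit, anything between is penumbra.
    """
    rng = random.Random(seed)  # private, seeded generator keeps results repeatable
    unblocked = 0
    for _ in range(samples):
        target = (
            light_center[0] + rng.uniform(-light_half_size, light_half_size),
            light_center[1] + rng.uniform(-light_half_size, light_half_size),
            light_center[2],
        )
        if not segment_hits_sphere(point, target, occluder_center, occluder_radius):
            unblocked += 1
    return unblocked / samples


if __name__ == "__main__":
    light, half, ball, r = (0.0, 0.0, 10.0), 1.0, (0.0, 0.0, 5.0), 1.0
    for x in (0.0, 2.0, 10.0):
        v = light_visibility((x, 0.0, 0.0), light, half, ball, r)
        print(f"x = {x:4.1f} m  visible fraction of light = {v:.2f}")
```

In this toy scene the ground point directly under the occluder sees none of the light, a distant point sees all of it, and a point near the shadow's edge sees only part. A pre-baked shadow map would have to be regenerated every time the light or the occluder moved, while sampling rays each frame handles motion naturally.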

Universal Scene Description

DRIVE Sim is based on Universal Scene Description (USD), an open framework developed by Pixar to build and collaborate on 3D content for virtual worlds.

USD provides a high level of abstraction to describe scenes in DRIVE Sim. For instance, USD makes it easy to define the state of the vehicle (position, velocity, acceleration) and trigger changes based on its proximity to other entities such as a landmark in the scene.
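The pattern described above, a vehicle state plus a trigger that fires near a landmark, can be sketched in a few lines of plain Python. This is a conceptual illustration only. It does not use the USD API or any DRIVE Sim interface, and the `VehicleState` and `ProximityTrigger` classes, their fields and the braking action are all hypothetical names invented for the example.

```python
from dataclasses import dataclass
from math import dist
from typing import Callable, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class VehicleState:
    """Hypothetical vehicle state: position (m), velocity (m/s), acceleration (m/s^2)."""
    position: Vec3
    velocity: Vec3
    acceleration: Vec3 = (0.0, 0.0, 0.0)

    def step(self, dt: float) -> None:
        """Advance the state by dt seconds (semi-implicit Euler integration)."""
        self.velocity = tuple(v + a * dt for v, a in zip(self.velocity, self.acceleration))
        self.position = tuple(p + v * dt for p, v in zip(self.position, self.velocity))


@dataclass
class ProximityTrigger:
    """Hypothetical trigger that runs an action once when a vehicle nears a landmark."""
    landmark: Vec3
    radius: float
    action: Callable[[VehicleState], None]
    fired: bool = False

    def update(self, vehicle: VehicleState) -> None:
        """Fire the action the first time the vehicle is within `radius` of the landmark."""
        if not self.fired and dist(vehicle.position, self.landmark) <= self.radius:
            self.fired = True
            self.action(vehicle)


def start_braking(vehicle: VehicleState) -> None:
    """Example action: begin decelerating along the x axis."""
    vehicle.acceleration = (-2.0, 0.0, 0.0)


if __name__ == "__main__":
    car = VehicleState(position=(0.0, 0.0, 0.0), velocity=(10.0, 0.0, 0.0))
    trigger = ProximityTrigger(landmark=(50.0, 0.0, 0.0), radius=5.0, action=start_braking)
    for _ in range(100):          # 10 seconds of simulated time at dt = 0.1 s
        car.step(0.1)
        trigger.update(car)
    print("trigger fired:", trigger.fired, "final velocity:", car.velocity)
```

In an actual USD scene, values like these would live as attributes on prims rather than in Python objects. The sketch only shows the shape of the state-and-trigger idea.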

Also, the framework comes with a rich toolset and is supported by most major content creation tools.

Scalability and Repeatability

Most applications for generating virtual environments, such as PC games, target systems with one or two GPUs. While the timing and latency of such architectures may be good enough for consumer games, designing a repeatable simulator for autonomous vehicles requires a much higher level of precision and performance.
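One way to picture what "repeatable" means: two runs of the same scenario should produce exactly the same results, regardless of how fast the hardware happens to render each frame. The Python sketch below shows two common ingredients of that property, a fixed simulation time step and a seeded random number generator. It is a generic illustration under assumed names and values, not a description of how DRIVE Sim is implemented.

```python
import random


def simulate(seed, steps, dt=0.01):
    """Run a toy 1D driving scenario and return the position after each step.

    The time step `dt` is fixed rather than taken from the wall clock, and all
    randomness comes from a private generator seeded with `seed`, so the same
    inputs always give the same trace.
    """
    rng = random.Random(seed)
    position, speed = 0.0, 20.0          # meters, meters per second
    trace = []
    for _ in range(steps):
        accel = rng.uniform(-1.0, 1.0)   # stand-in for traffic disturbances
        speed += accel * dt
        position += speed * dt
        trace.append(position)
    return trace


if __name__ == "__main__":
    first = simulate(seed=42, steps=1000)
    second = simulate(seed=42, steps=1000)
    print("identical runs:", first == second)
```

A game loop that advances physics by however much real time has elapsed since the last frame would not pass this check, because frame times vary from run to run.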

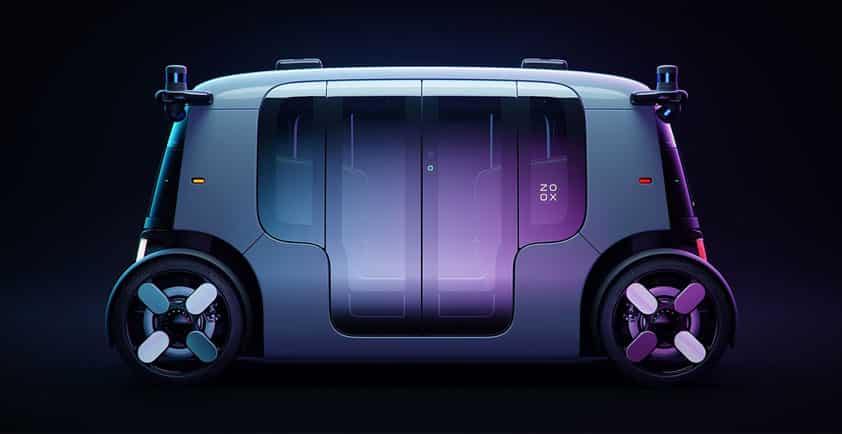

Omniverse enables DRIVE Sim to simultaneously simulate multiple cameras, radars and lidars in real time, supporting sensor configurations from Level 2 assisted driving to Level 4 and Level 5 fully autonomous driving.

Together, these new capabilities brought to life by Omniverse deliver a simulation experience that is virtually indistinguishable from reality.

Author - Matt Cragun, product manager in the Autonomous Vehicles group at NVIDIA