AUTOX UNVEILS FULL SELF-DRIVING SYSTEM POWERED BY NVIDIA DRIVE

>> Startup’s Gen5 robotaxi platform achieves more than 2,000 TOPS.

Your robotaxi is arriving soon.

Self-driving startup AutoX last week took the wraps off its “Gen5” self-driving system. The autonomous driving platform, which is specifically designed for robotaxis, uses NVIDIA DRIVE automotive-grade GPUs to reach up to 2,200 trillion operations per second (TOPS) of AI compute performance.

In January, AutoX launched a commercial robotaxi system in Shenzhen, China, becoming one of the first autonomous driving companies in the world to provide full self-driving mobility services with no safety driver behind the wheel. The Gen5 system is the next step in its global rollout of safer, more efficient autonomous transportation.

“Safety is key. We need higher processing performance for safe and scalable robotaxi operations,” said Jianxiong Xiao, founder and CEO at AutoX. “With NVIDIA DRIVE, we now have power for more redundancy in a form factor that is automotive grade and more compact.”

Zero Blind Spots

In developing safe self-driving technology, AutoX aims to solve the toughest environments first, specifically high-traffic urban areas.

At the Gen5 Release Event, the company livestreamed its fully driverless robotaxi transporting a passenger through a challenging area of narrow streets in China known as the “Urban Village.”

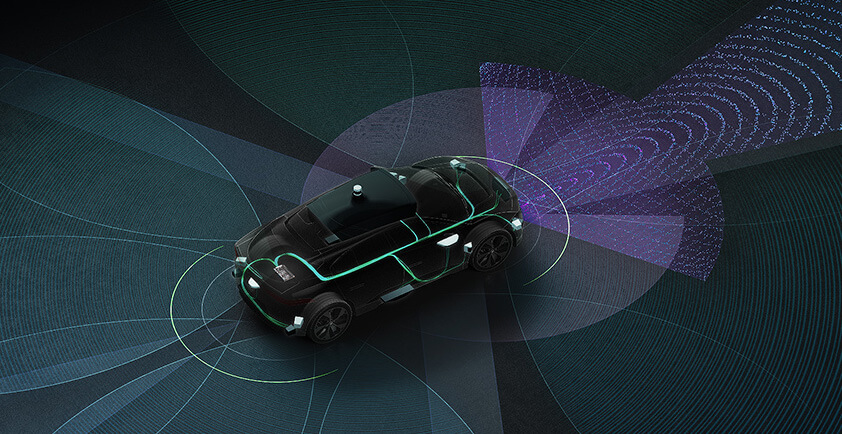

Safely navigating such chaotic streets requires sensors that can detect obstacles and other road users with the highest levels of accuracy. The Gen5 system relies on 28 automotive-grade camera sensors that generate more than 200 million pixels per frame, covering 360 degrees around the car. (For comparison, a single high-definition video frame contains about 2 million pixels.)

In addition to cameras, the robotaxi system includes surround 4D radar and six high-resolution lidar sensors that produce 15 million points per second.
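To put the sensor figures in perspective, the arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope illustration only: the function names are hypothetical, the per-sensor averages assume the load is split evenly across sensors, and the lidar figure is assumed to be the combined output of all six units, none of which the announcement states.

```python
# Figures quoted in the article. The per-sensor averages below are
# illustrative and assume an even split across sensors (an assumption,
# not something the announcement states).
TOTAL_CAMERA_PIXELS = 200_000_000    # "more than 200 million pixels per frame"
CAMERA_COUNT = 28
HD_FRAME_PIXELS = 2_000_000          # "about 2 million pixels" per HD frame
LIDAR_POINTS_PER_SECOND = 15_000_000  # assumed to be the combined figure
LIDAR_COUNT = 6


def hd_frame_equivalent(total_pixels: int, hd_pixels: int) -> float:
    """Return how many HD frames hold the same number of pixels."""
    return total_pixels / hd_pixels


def average_per_sensor(total: int, sensor_count: int) -> float:
    """Return the mean share per sensor, assuming an even split."""
    return total / sensor_count


if __name__ == "__main__":
    frames = hd_frame_equivalent(TOTAL_CAMERA_PIXELS, HD_FRAME_PIXELS)
    per_camera = average_per_sensor(TOTAL_CAMERA_PIXELS, CAMERA_COUNT)
    per_lidar = average_per_sensor(LIDAR_POINTS_PER_SECOND, LIDAR_COUNT)
    print(f"HD-frame equivalent per capture: {frames:.0f}")
    print(f"Average pixels per camera: {per_camera / 1e6:.1f} million")
    print(f"Average lidar points per second per unit: {per_lidar / 1e6:.1f} million")
```

Under these assumptions, one full camera capture carries roughly as many pixels as 100 high-definition frames, or about 7 million pixels per camera on average.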

At the center of the Gen5 system are two NVIDIA Ampere architecture GPUs that deliver 900 TOPS each for a true Level 4 autonomous production platform. With this unprecedented level of AI compute at the core, Gen5 has enough performance to power ultra-complex self-driving DNNs while maintaining the compute headroom for more advanced upgrades.

This capability makes it possible for the vehicles to react in real time to high-traffic situations, like dozens of motorcycles and scooters cutting in or riding the opposite way at the same time, and to continually improve by learning how to manage new scenarios as they arise.

More Stops Added

The Shenzhen fully driverless robotaxi service is just the first stop in AutoX’s roadmap to deploy a global driverless vehicle platform.

With a population of more than 12 million people and ranking in the top 50 of global cities with the heaviest traffic, Shenzhen provides an ideal setting for developing a scalable robotaxi model.

The startup plans to roll out thousands of autonomous vehicles powered by the Gen5 system over the next couple of years and expand to multiple cities around the world. AutoX is working with partners such as Stellantis and Honda to integrate its technology into a variety of vehicle platforms.

With the open, scalable NVIDIA DRIVE platform behind each of these use cases, the opportunities for the road ahead are limitless.

Author - Katie Burke, NVIDIA’s Automotive Content Marketing Manager

NVIDIA TO ACQUIRE DEEPMAP, ENHANCING MAPPING SOLUTIONS FOR THE AV INDUSTRY

>> DeepMap expected to extend NVIDIA mapping products, scale worldwide map operations and expand NVIDIA's full self-driving expertise.

It’s time for autonomous vehicle developers to blaze new trails.

NVIDIA has agreed to acquire DeepMap, a startup dedicated to building high-definition maps for autonomous vehicles to navigate the world safely.

“The acquisition is an endorsement of DeepMap’s unique vision, technology and people,” said Ali Kani, vice president and general manager of Automotive at NVIDIA. “DeepMap is expected to extend our mapping products, help us scale worldwide map operations and expand our full self-driving expertise.”

“NVIDIA is an amazing, world-changing company that shares our vision to accelerate safe autonomy,” said James Wu, co-founder and CEO of DeepMap. “Joining forces with NVIDIA will allow our technology to scale more quickly and benefit more people sooner. We look forward to continuing our journey as part of the NVIDIA team.”

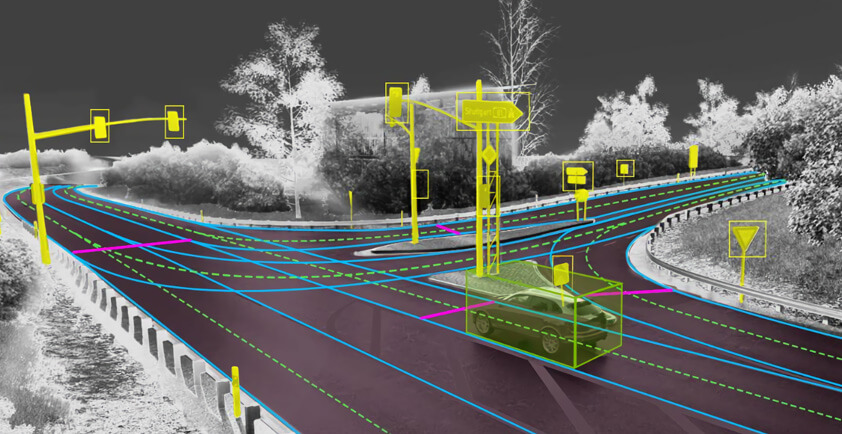

Maps that are accurate to within a few meters are good enough when providing turn-by-turn directions for humans. AVs, however, require much greater precision. They must operate with centimeter-level precision for accurate localization, the ability of an AV to locate itself in the world.

Proper localization also requires constantly updated maps that reflect current road conditions, such as a work zone or a lane closure. The maps need to scale efficiently across AV fleets, with fast processing and minimal data storage. Finally, they must be able to function worldwide.

Experienced Mapmakers

DeepMap was founded five years ago by Wu and Mark Wheeler, veterans of Google, Apple and Baidu, among other companies. The U.S.-based company has developed a high-definition mapping solution that meets these requirements and has already been validated by the AV industry with a wide array of potential customers around the world.

The team, primarily located in the San Francisco Bay Area, has many decades of collective experience in mapping technology and has developed a solution that treats autonomous vehicles as both map creators and map consumers. Using crowdsourced data from vehicle sensors lets DeepMap build a high-definition map that’s continuously updated as the car drives.

Ongoing Partner Support

NVIDIA will continue working with DeepMap’s ecosystem to meet their needs, investing in new capabilities and services for new and existing partners.

NVIDIA DRIVE is a software-defined, end-to-end platform — from deep neural network training and validation in the data center to high-performance compute in the vehicle — that enables continuous improvement and deployment via over-the-air updates.

DeepMap’s technology will bolster the mapping and localization capabilities available on NVIDIA DRIVE, ensuring autonomous vehicles always know precisely where they are and where they’re going.

“We are excited to welcome the DeepMap team to NVIDIA. They have a proven track record and are entrepreneurial, nimble and engineering-focused. DeepMap meets a deep need in the industry, and together we will develop and extend these capabilities,” said Kani.

The acquisition is expected to close in the third calendar quarter of 2021, subject to regulatory approval and customary closing conditions.

Author - Danny Shapiro, NVIDIA’s Senior Director of Automotive

AEYE’S INTELLIGENT LIDAR NOW AVAILABLE ON THE NVIDIA DRIVE AUTONOMOUS VEHICLE PLATFORM

>> Adaptive, Intelligent LiDAR Augments NVIDIA’s Open Platform for L2+ to Level 5 Fully Autonomous Driving

Dublin, CA – AEye, Inc. (“AEye”), the global leader in adaptive, high-performance LiDAR solutions, today announced it is working with NVIDIA to bring its adaptive, intelligent sensing to the NVIDIA DRIVE® autonomous vehicle platform.

The NVIDIA DRIVE platform is an open, end-to-end solution for Level 2+ automated driving to Level 5 fully autonomous driving. With AEye’s intelligent, adaptive LiDAR supported on the NVIDIA DRIVE platform, autonomous vehicle developers will have access to next-generation tools to increase the saliency and quality of data collected as they build and deploy state-of-the-art ADAS and AV applications. Specifically, AEye’s SDK and Visualizer will allow developers to configure the sensor and view point clouds on the platform.

“We are pleased to offer our customers the full functionality of our sensor on the NVIDIA DRIVE platform,” said Blair LaCorte, CEO of AEye. “ADAS and AV developers wanting a best-in-class, full-stack autonomous solution now have the unique ability to use a single adaptive LiDAR platform from Level 2+ through Level 5 as they configure solutions for different levels of autonomy. We believe that intelligence will be the key to delivering new levels of safety and performance.”

“AI-driven sensing and perception are critical to solving the most challenging corner cases in automated and autonomous driving,” said Glenn Schuster, senior director of sensor ecosystems at NVIDIA. “As an NVIDIA ecosystem partner, AEye’s adaptive, intelligent-sensing capabilities complement our DRIVE platform, which enables safe AV development and deployment.”

AEye’s adaptive LiDAR takes a uniquely intelligent approach to sensing, called iDAR™ (Intelligent Detection and Ranging). Its high-performance, adaptive LiDAR is based on a bistatic architecture, which keeps the transmit and receive channels separate. As each laser pulse is transmitted, the solid-state receiver is told where and when to look for its