TAKING IT TO THE STREETS: RIDE IN AN NVIDIA SELF-DRIVING CAR WITH DRIVE LABS

Turning a traditional car into an autonomous vehicle is an enormous challenge. At NVIDIA, we’re tackling the problem by building the essential blocks of autonomous driving — categorized into perception, localization, and planning/control software — and applying high-performance compute.

To test and validate our DRIVE AV Software, we use simulation testing with our DRIVE Constellation platform and operate test cars on public roads around our headquarters in Santa Clara, Calif., and other locations around the world.

With safety-certified drivers at the wheel and co-pilots monitoring the system, the vehicles handle highway interchanges, lane changes and other maneuvers, testing the various software components in the real world.

Seeing the World with New AIs

At the core of our perception building blocks are deep neural networks (DNNs). These algorithms are mathematical models, inspired by the human brain, that learn from experience.

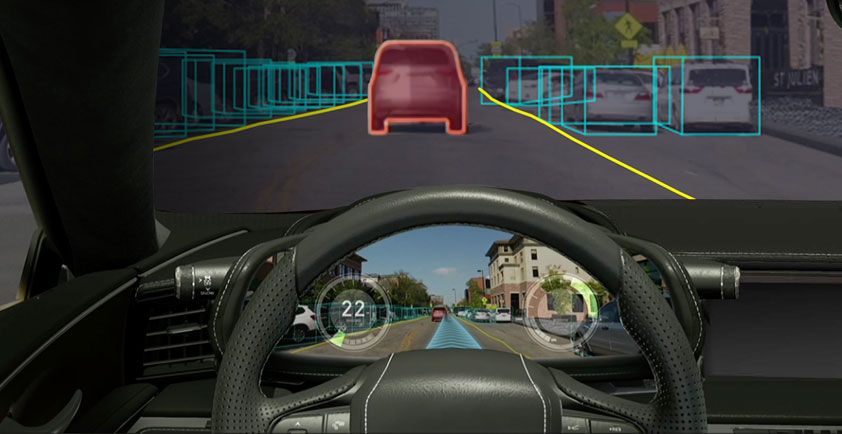

We use our DriveNet DNN to build a data-driven understanding of obstacles (for example, cars vs. pedestrians) and to compute the distance to those obstacles. LaneNet detects lane information, while an ensemble of DNNs perceives drivable paths.

WaitNet, LightNet and SignNet detect and classify wait conditions — that is, intersections, traffic lights and traffic signs, respectively. Our ClearSightNet DNN also runs in the background and assesses whether the cameras see clearly, are occluded or are blocked.

On the Map

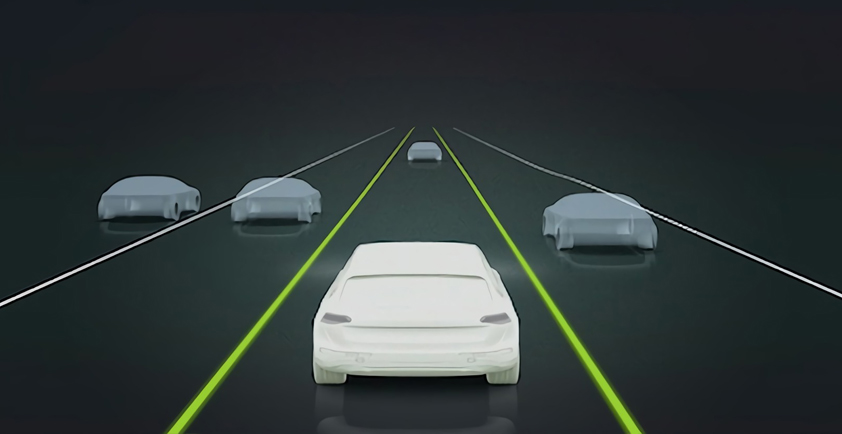

Localization is the software pillar that enables the self-driving car to know precisely where it is on the road. By taking in high-definition map information, desired driving route information, and real-time localization results, the autonomous vehicle can create an origin-to-destination lane plan for its target route.

The charted lane plan provides information on when the self-driving car needs to stay in lane, change lanes, or negotiate lane forks/splits/merges along the route. Notifications of these lane plan modes are sent to planning and control software for execution.
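To make the idea of lane plan modes concrete, here is a minimal Python sketch of how a lane plan could be represented and queried. It is an illustration only, not the DRIVE AV data model: the names `LanePlanMode`, `LanePlanSegment`, `mode_at` and `upcoming_notifications` are hypothetical, and the plan is assumed to be a list of segments measured in meters along the route.

```python
from dataclasses import dataclass
from enum import Enum, auto


class LanePlanMode(Enum):
    """Hypothetical labels for the lane plan modes described in the text."""
    STAY_IN_LANE = auto()
    CHANGE_LANE = auto()
    NEGOTIATE_FORK_SPLIT_MERGE = auto()


@dataclass
class LanePlanSegment:
    start_m: float       # distance along the route where the segment begins
    end_m: float         # distance along the route where the segment ends
    mode: LanePlanMode   # what the car should do within this segment


def mode_at(plan, position_m):
    """Return the mode of the segment containing position_m, or None."""
    for seg in plan:
        if seg.start_m <= position_m < seg.end_m:
            return seg.mode
    return None


def upcoming_notifications(plan, position_m, horizon_m):
    """List (distance_ahead_m, mode) for segments starting within the horizon."""
    return [
        (seg.start_m - position_m, seg.mode)
        for seg in plan
        if position_m < seg.start_m <= position_m + horizon_m
    ]
```

With a plan of three segments (stay in lane, change lane, stay in lane), `upcoming_notifications` would report the lane change once it falls inside the look-ahead horizon, which is the kind of message that would be handed to planning and control.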

Localization results also make it possible to compute key quantities such as the estimated time of arrival (ETA) at the destination, and to track the vehicle location live along the created lane plan.
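As a simple illustration of the ETA computation, the sketch below sums travel time over the remaining route segments. It assumes each segment carries an expected speed, which is a simplification chosen for this example. The function name `eta_seconds` and the segment tuple layout are hypothetical.

```python
def eta_seconds(segments, position_m):
    """Estimate the remaining travel time in seconds.

    segments: list of (start_m, end_m, expected_speed_mps) tuples ordered
              along the route (an assumed representation for this sketch).
    position_m: current localized position along the route, in meters.
    """
    total = 0.0
    for start_m, end_m, speed_mps in segments:
        if speed_mps <= 0:
            raise ValueError("expected speed must be positive")
        if position_m >= end_m:
            continue  # segment already driven
        # Only count the part of the segment that is still ahead of the car.
        remaining_m = end_m - max(start_m, position_m)
        total += remaining_m / speed_mps
    return total
```

For example, with 500 m left on a 25 m/s segment followed by a 600 m segment at 15 m/s, the estimate is 20 s plus 40 s.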

Where the Rubber Meets the Road

With the inputs provided by both perception and localization, the planning and control layer enables the self-driving car to physically drive itself. Planning software consumes perception and localization results to determine the physical trajectory the car needs to take to complete a particular maneuver.

For example, for the autonomous lane changes shown in the video above, planning software first performs a lane change safety check using surround camera and radar perception to ensure the intended maneuver can be executed.
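A lane change safety check can be sketched as a gap test against objects perceived in the target lane. The Python below is a minimal illustration under stated assumptions, not the check used in DRIVE AV: the `TrackedObject` fields, the function `lane_change_is_safe` and the default thresholds (10 m minimum gap, 2 s minimum time gap) are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class TrackedObject:
    lane_offset: int           # 0 = ego lane, -1 = left lane, +1 = right lane
    longitudinal_m: float      # positive if ahead of the ego car, negative if behind
    relative_speed_mps: float  # object speed minus ego speed


def lane_change_is_safe(objects, target_lane_offset,
                        min_gap_m=10.0, min_time_gap_s=2.0):
    """Return True if no object in the target lane is too close or closing too fast."""
    for obj in objects:
        if obj.lane_offset != target_lane_offset:
            continue  # only objects in the target lane matter here
        gap_m = abs(obj.longitudinal_m)
        if gap_m < min_gap_m:
            return False
        # Closing speed is positive when the gap is shrinking.
        if obj.longitudinal_m >= 0:
            closing_mps = -obj.relative_speed_mps  # slower object ahead
        else:
            closing_mps = obj.relative_speed_mps   # faster object behind
        if closing_mps > 0 and gap_m / closing_mps < min_time_gap_s:
            return False
    return True
```

In this sketch, a car 15 m behind in the target lane and approaching 10 m/s faster than the ego car leaves only 1.5 s of time gap, so the lane change would be rejected.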

Next, it computes the longitudinal speed profile as well as the lateral path plan needed to move from the center of the current lane to the center of the target lane. Control software then issues the acceleration/deceleration and left/right steering commands to execute the lane change plan.
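One common way to sketch a lateral path between two lane centers is a quintic polynomial in normalized time, which starts and ends with zero lateral velocity and acceleration. The Python below uses that textbook technique purely for illustration; the source does not state which method the planner uses. The function names, the acceleration-limited speed ramp and all parameter values are assumptions.

```python
def lateral_offset(t, duration_s, lane_width_m):
    """Lateral offset from the current lane center at time t.

    Uses the quintic blend 10*tau^3 - 15*tau^4 + 6*tau^5, which goes from
    0 to 1 with zero first and second derivatives at both ends.
    """
    tau = min(max(t / duration_s, 0.0), 1.0)  # normalized time, clamped to [0, 1]
    blend = 10 * tau**3 - 15 * tau**4 + 6 * tau**5
    return lane_width_m * blend


def longitudinal_speed(t, v0_mps, v_target_mps, accel_limit_mps2):
    """Speed at time t when ramping from v0 to the target at a bounded rate."""
    dv = v_target_mps - v0_mps
    max_change = accel_limit_mps2 * max(t, 0.0)
    if abs(dv) <= max_change:
        return v_target_mps  # target speed already reached
    return v0_mps + max_change if dv > 0 else v0_mps - max_change
```

Sampling `lateral_offset` over a 6-second maneuver with a 3.6 m lane width gives an offset of 0 m at the start, 1.8 m at the midpoint and 3.6 m at the end. A controller would then track these samples with steering and acceleration commands.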

The engine that runs these components is the high-performance, energy-efficient NVIDIA DRIVE AGX platform. DRIVE AGX makes it possible to simultaneously run feature-rich, 360-degree surround perception, localization, and planning and control software in real time.

Together, these elements create diversity and redundancy to enable safe autonomous driving.

To learn more about the software functionality we’re building, check out the rest of our DRIVE Labs series.

Author: Neda Cvijetic - Senior Manager, Autonomous Vehicles at NVIDIA