AT&T FOUNDRY - LIVE HD-3D MAPPING FOR VEHICLE AUTOMATION

As autonomous vehicles (AV) and advanced driver-assistance systems (ADAS) become more prevalent, it becomes increasingly possible, efficient, and even necessary for cars to act as cooperative fleets rather than as independent data centers on wheels. Safety, traffic flow coordination, regulatory compliance, and cost reduction will require these vehicles to combine their own powerful onboard intelligence with data and decision-support systems hosted off the vehicle, at the network edge or in the cloud. Vehicles must always be able to perform elementary operating functions regardless of network connectivity. However, the enhanced situational awareness afforded by connected fleets, particularly in dense traffic, means that automated vehicles are unlikely to develop as purely self-contained systems.

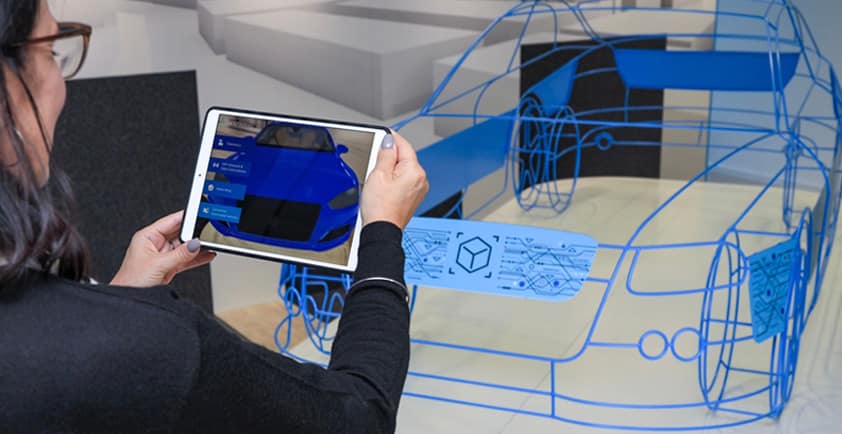

Collaborative mapping systems are a prime example of information sharing between vehicles. Unlike the flat maps human drivers use today, maps for AV and ADAS are detailed three-dimensional (3D) recreations of the physical roadside environment, with high-definition (HD) precision often down to the centimeter scale. Access to this information can allow a vehicle to “see around corners” and anticipate its environment well beyond the 100- to 200-meter range typical of current AV sensors. A scalable and highly accurate mapping system is therefore critical to deploying AV and ADAS technology widely in the real world. It is not surprising, then, that many of the companies at the forefront of AV technology have also taken leading positions in the race to build an effective mapping system.

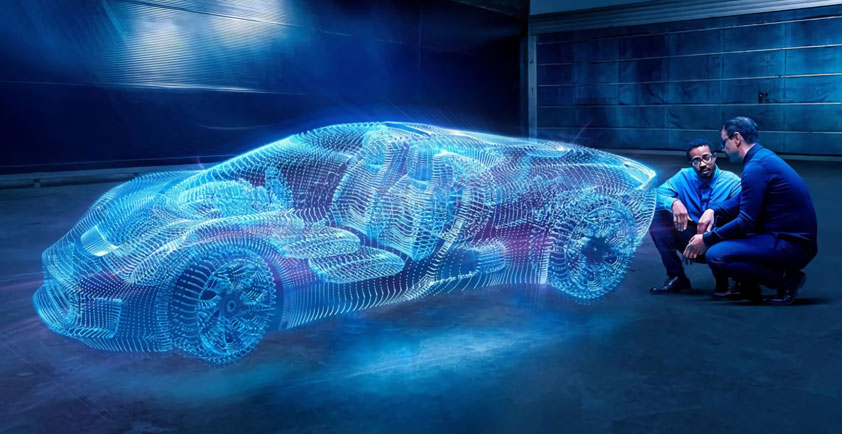

To remain accurate, HD/3D maps must also be “live,” that is, continuously updated. Some companies are developing end-to-end frameworks that maintain a dynamic representation of the environment by crowd-sourcing data from AV- and ADAS-equipped vehicles already on the road or, occasionally, from vehicles deployed to fly overhead. The same sensors (cameras, lidar, radar, etc.) that enable autonomous perception can also update a shared mapping system: the map provides valuable context to the onboard sensors, and the discrepancies those sensors detect are sent back as live updates to the centralized resource. This framework lets all connected vehicles operate as a cooperative fleet, sensing the world in a distributed manner from many points of view, which increases the safety and efficiency of each.
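To make the update loop more concrete, the Python sketch below shows one way a shared map might turn sensor discrepancies into live updates. Everything in it is a hypothetical illustration rather than any vendor's method: the `SharedMap` class, the landmark IDs, the 10 cm tolerance, and the rule that three distinct vehicles must report the same change before the map is edited. Observations that agree with the map are ignored, and repeated reports from one vehicle count only once. A real system would also have to handle sensor noise, timestamps, and conflicting reports.

```python
from dataclasses import dataclass, field
from math import dist

Point = tuple[float, float, float]  # (x, y, z) in meters, in an assumed local frame

@dataclass
class SharedMap:
    """Hypothetical shared HD map keyed by landmark ID."""
    landmarks: dict[str, Point]
    tolerance_m: float = 0.10        # assumed agreement tolerance (10 cm)
    confirmations_needed: int = 3    # assumed number of distinct vehicles required
    pending: dict[str, dict[str, Point]] = field(default_factory=dict)

    def report(self, vehicle_id: str, landmark_id: str, observed: Point) -> bool:
        """Ingest one sensor observation; return True if it triggered a map update."""
        known = self.landmarks.get(landmark_id)
        if known is not None and dist(known, observed) <= self.tolerance_m:
            return False  # the map already agrees with what the vehicle sees
        # Record a discrepancy; a vehicle's newest report replaces its older one.
        votes = self.pending.setdefault(landmark_id, {})
        votes[vehicle_id] = observed
        if len(votes) < self.confirmations_needed:
            return False
        # Enough distinct vehicles report a change: store the mean observed position.
        n = len(votes)
        self.landmarks[landmark_id] = (
            sum(p[0] for p in votes.values()) / n,
            sum(p[1] for p in votes.values()) / n,
            sum(p[2] for p in votes.values()) / n,
        )
        del self.pending[landmark_id]
        return True

if __name__ == "__main__":
    shared = SharedMap({"sign-17": (10.0, 5.0, 2.0)})
    print(shared.report("car-a", "sign-17", (10.02, 5.0, 2.0)))  # False: within tolerance
    for car in ("car-a", "car-b", "car-c"):
        updated = shared.report(car, "sign-17", (12.0, 5.0, 2.0))
    print(updated, shared.landmarks["sign-17"])  # True (12.0, 5.0, 2.0)
```

Requiring agreement from several independent vehicles is one simple way to keep a single faulty sensor from corrupting the shared map; it trades a little update latency for robustness.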

Hosting the HD/3D data and live update functions at the 5G network edge has the potential to improve the responsiveness and efficiency of these collaborative mapping systems. Mapping information and road conditions are geographically specific by nature: a vehicle mostly needs data about the area it is driving through, so each edge site can store and update the map region it serves. Furthermore, as these maps grow more sophisticated and the number of vehicles using them increases, the data volume and real-time processing requirements may outpace the capabilities of a centralized cloud framework. Integration with 5G is also expected to provide positioning information that is much more precise than traditional GPS while being much less computationally expensive for the vehicle’s onboard system. By using real-time connections to bring external decision support into mapping and driving algorithms, the 5G edge could become the catalyst for widespread deployment of AV and ADAS technology.
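The geographic-locality argument can be illustrated with a small Python sketch that partitions the map into tiles and routes a vehicle's requests to whichever edge site hosts each tile. The fixed-degree grid, the `TILE_DEG` value, the site names, and the fallback to a central cloud are all hypothetical. Production systems more commonly use hierarchical schemes such as geohash, S2 cells, or quadkeys, which also cope better with longitude degrees narrowing toward the poles.

```python
import math

# Hypothetical tile size: 0.01 degree, about 1.1 km north-south.
TILE_DEG = 0.01

Tile = tuple[int, int]

def tile_of(lat: float, lon: float) -> Tile:
    """Map a latitude/longitude (degrees) onto a fixed-size grid tile."""
    return (math.floor(lat / TILE_DEG), math.floor(lon / TILE_DEG))

def tiles_around(lat: float, lon: float, radius: int = 1) -> list[Tile]:
    """Return the tile containing the vehicle plus its neighbors within `radius` tiles."""
    row, col = tile_of(lat, lon)
    return [
        (row + dr, col + dc)
        for dr in range(-radius, radius + 1)
        for dc in range(-radius, radius + 1)
    ]

def route_requests(
    lat: float,
    lon: float,
    edge_assignment: dict[Tile, str],
    radius: int = 1,
    fallback: str = "central-cloud",
) -> dict[str, list[Tile]]:
    """Group the tiles a vehicle needs by the site (edge or cloud) that hosts them."""
    plan: dict[str, list[Tile]] = {}
    for tile in tiles_around(lat, lon, radius):
        site = edge_assignment.get(tile, fallback)
        plan.setdefault(site, []).append(tile)
    return plan

if __name__ == "__main__":
    # Hypothetical assignment: edge site A hosts a 5x5 block of tiles.
    assignment = {
        (r, c): "edge-site-A"
        for r in range(3742, 3747)
        for c in range(-12217, -12212)
    }
    print(route_requests(37.4419, -122.1430, assignment))
```

When every tile around the vehicle is assigned to the same edge site, all of its map traffic stays local; tiles without an edge assignment fall back to the central cloud.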

Developing a framework to optimally integrate 5G and edge capabilities into a live HD/3D mapping system will require input and collaboration across the ecosystem. The EC Zone Program aims to bring together mapping software companies, OEMs, and other infrastructure players to determine how to effectively deploy and synchronize functions across each tier of the computing framework (e.g., vehicle, edge, and centralized) using our next-generation network. Together, we hope to improve the traffic flow and safety of our future roads.